VishGuard AI — AI-Powered Voice Phishing Detection System

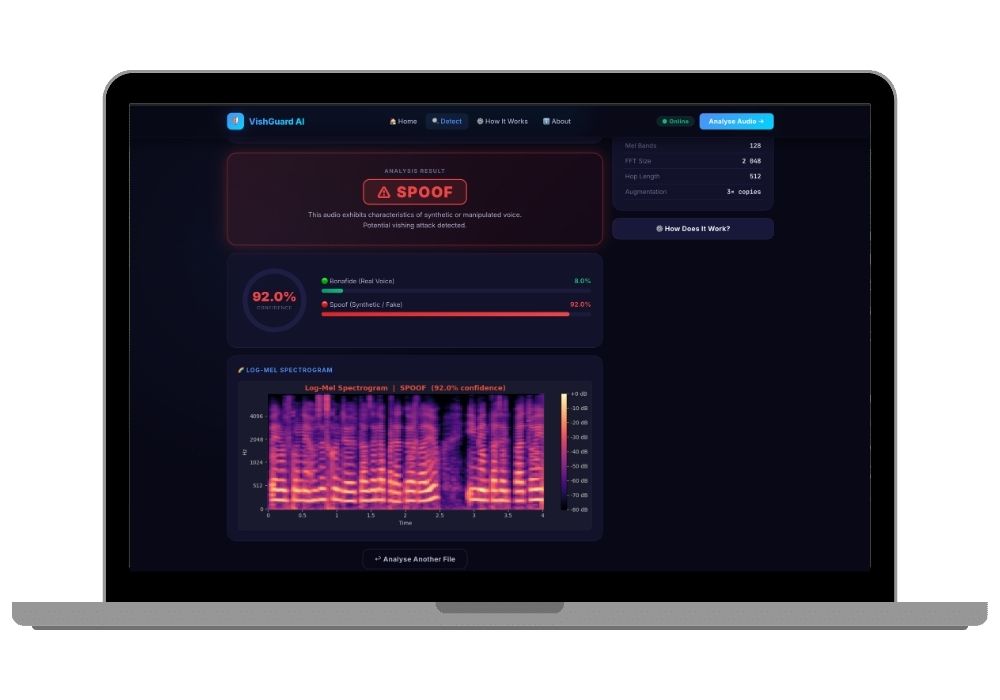

VishGuard AI detects fake or manipulated voices in vishing attacks using TensorFlow, Flask, and librosa. It runs a CNN or CNN+LSTM model on log-mel spectrograms and delivers real-time predictions through a web interface.

Technology Used

Python | TensorFlow | Keras | Flask | librosa | scikit-learn | NumPy | Matplotlib | Seaborn | Jupyter Notebook | HTML | CSS | JavaScript

VishGuard AI - Voice Phishing Detection System

Voice phishing, commonly called vishing, is one of the fastest-growing social engineering threats today. Attackers use AI-generated voices to impersonate bank executives, government officials, or family members, and victims often cannot tell the difference. VishGuard AI is a final year project built to detect exactly these kinds of attacks using deep learning on audio signals.

The system trains two neural network architectures, a CNN and a CNN+LSTM, on 128-band log-mel spectrograms extracted from labelled audio files. After training, the best-performing model is automatically selected by F1 score and deployed through a Flask web application where users can upload audio or record directly in the browser and receive a real-time prediction.
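The selection step described above can be sketched roughly as follows. This is a minimal illustration, not the notebook's actual code: the helper names `f1_score_binary` and `select_best_model`, the label convention (1 = spoof, 0 = bonafide), and the sample predictions are all assumptions made for the example.

```python
import numpy as np


def f1_score_binary(y_true, y_pred, positive=1):
    """F1 score for the positive class, computed from label arrays."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = np.sum((y_pred == positive) & (y_true == positive))
    fp = np.sum((y_pred == positive) & (y_true != positive))
    fn = np.sum((y_pred != positive) & (y_true == positive))
    denom = 2 * tp + fp + fn
    return float(2 * tp / denom) if denom else 0.0


def select_best_model(y_true, predictions):
    """Return (name, f1) for the candidate with the highest F1.

    `predictions` maps a model name to its predicted labels on a
    held-out set (which split is used is an assumption here).
    """
    scores = {
        name: f1_score_binary(y_true, y_pred)
        for name, y_pred in predictions.items()
    }
    best = max(scores, key=scores.get)
    return best, scores[best]


# Hypothetical labels, for illustration only (1 = spoof, 0 = bonafide).
y_true = [1, 1, 1, 0, 0, 0]
candidates = {
    "cnn": [1, 1, 0, 0, 0, 0],
    "cnn_lstm": [1, 1, 1, 0, 0, 1],
}
best_name, best_f1 = select_best_model(y_true, candidates)
print(best_name, round(best_f1, 3))
```

Selecting on F1 rather than accuracy keeps the choice sensitive to both missed spoofs and false alarms, which matters when only a handful of test clips are available.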

If you are looking for a cybersecurity or AI final year project that goes beyond the usual classification demos, this one has enough depth to hold up during a viva. You can explore more cybersecurity and AI projects on the cybersecurity projects and AI and ML projects pages.

What the Project Does

The project takes a raw audio file, resamples it to 16 kHz, trims silence, normalises the amplitude, and pads or truncates it to exactly four seconds. It then extracts a 128-band log-mel spectrogram from the processed audio and feeds it into the trained model. The model outputs a probability score for two classes: bonafide (genuine human voice) and spoof (synthetic or wavelet-manipulated fake voice). Results are displayed alongside a colour-coded spectrogram rendered directly in the browser.
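The preprocessing chain above might look something like the sketch below. The 16 kHz rate, four-second length, and 128 mel bands come from the description; `N_FFT`, `HOP_LENGTH`, the `top_db` trim threshold, and all function names are assumptions chosen for illustration. librosa is imported inside `audio_to_logmel` so the two NumPy helpers can be used on their own.

```python
import numpy as np

SAMPLE_RATE = 16_000   # from the description
DURATION_S = 4         # from the description
N_MELS = 128           # from the description
N_FFT = 1024           # assumed value
HOP_LENGTH = 512       # assumed value
TARGET_LEN = SAMPLE_RATE * DURATION_S


def peak_normalise(y):
    """Scale the waveform so its largest absolute sample is 1."""
    peak = np.max(np.abs(y)) if y.size else 0.0
    return y / peak if peak > 0 else y


def fix_length(y, target_len=TARGET_LEN):
    """Truncate, or zero-pad at the end, to exactly target_len samples."""
    if len(y) >= target_len:
        return y[:target_len]
    return np.pad(y, (0, target_len - len(y)))


def audio_to_logmel(path):
    """Load a file and return its log-mel spectrogram, shape (N_MELS, frames)."""
    import librosa  # imported lazily; only this function needs it

    y, _ = librosa.load(path, sr=SAMPLE_RATE, mono=True)  # resample to 16 kHz
    y, _ = librosa.effects.trim(y, top_db=30)             # trim threshold assumed
    y = fix_length(peak_normalise(y))
    mel = librosa.feature.melspectrogram(
        y=y, sr=SAMPLE_RATE, n_fft=N_FFT, hop_length=HOP_LENGTH, n_mels=N_MELS
    )
    return librosa.power_to_db(mel, ref=np.max)
```

Fixing the clip length before feature extraction means every spectrogram has the same number of frames, so the model can use a fixed input shape.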

Project Features

- Dual model training with automatic best-model selection by F1 score

- 128-band log-mel spectrogram feature extraction using librosa

- Three augmentation strategies applied to training data: Gaussian noise, time shift, and pitch shift

- Equal Error Rate (EER) metric, the standard evaluation metric in anti-spoofing research

- Real-time browser-based audio upload and microphone recording

- Spectrogram visualisation colour-coded per prediction result

- Flask REST API with a /predict endpoint accepting WAV, MP3, FLAC, OGG, M4A, and WebM files

- Shared preprocessing config saved as config.pkl to eliminate train-serve skew

- Four-page dark-themed web UI with Home, Detect, Process, and About pages

- Health check endpoint at /health returning model load status and architecture type
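The two endpoints in the list above could be wired up along these lines. This is a sketch under stated assumptions, not the shipped application: the form field name `audio`, the JSON keys, the `create_app` factory, and the `predict_fn` callable (a stand-in for preprocessing plus model inference) are hypothetical. Only the routes, the accepted extensions, and port 5001 come from the description.

```python
import os

# Extensions listed for the /predict endpoint in the description.
ALLOWED_EXTENSIONS = {"wav", "mp3", "flac", "ogg", "m4a", "webm"}


def allowed_file(filename):
    """True when the filename ends in one of the supported extensions."""
    ext = os.path.splitext(filename)[1].lower().lstrip(".")
    return ext in ALLOWED_EXTENSIONS


def create_app(predict_fn=None, architecture="unknown"):
    """Build a Flask app; predict_fn is a hypothetical inference callable."""
    from flask import Flask, jsonify, request  # imported lazily

    app = Flask(__name__)

    @app.get("/health")
    def health():
        # Reports whether a model is available and which architecture it is.
        return jsonify(model_loaded=predict_fn is not None,
                       architecture=architecture)

    @app.post("/predict")
    def predict():
        upload = request.files.get("audio")  # field name is an assumption
        if upload is None or not allowed_file(upload.filename or ""):
            return jsonify(error="missing or unsupported audio file"), 400
        if predict_fn is None:
            return jsonify(error="model not loaded"), 503
        # predict_fn is expected to return a JSON-serialisable dict.
        return jsonify(predict_fn(upload))

    return app


if __name__ == "__main__":
    create_app().run(port=5001)
```

Checking the extension before touching the model keeps obviously invalid uploads from reaching the audio decoder.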

Model Architectures

The CNN model uses three convolutional blocks with batch normalisation, ReLU activation, and max pooling, followed by global average pooling and a dense classification head with dropout. It is fast, generalises well on small datasets, and learns local spectral patterns in the mel spectrogram.

The CNN+LSTM model extends this by reshaping the convolutional output and passing it through two LSTM layers before the classification head. This allows the model to capture temporal dynamics in the spectrogram, which is useful for detecting synthesis artefacts that change over time. Both models are trained with early stopping and a learning rate scheduler to avoid overfitting on the 100-file dataset.
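A rough Keras sketch of the two architectures is given below. The three convolutional blocks, global average pooling, two LSTM layers, dropout, early stopping, and a learning rate scheduler follow the description. The filter counts, layer widths, dropout rate, two-unit softmax output, the 126-frame input width, and the choice of `ReduceLROnPlateau` as the scheduler are assumptions. TensorFlow is imported inside the builders so the shape helper runs without it.

```python
def pooled_shape(n_mels=128, n_frames=126, n_blocks=3):
    """Spectrogram height and width after n_blocks of 2x2 max pooling."""
    for _ in range(n_blocks):
        n_mels, n_frames = n_mels // 2, n_frames // 2
    return n_mels, n_frames


def conv_blocks(x, layers, filters=(32, 64, 128)):
    """Conv -> batch norm -> ReLU -> max pool, once per filter count."""
    for f in filters:
        x = layers.Conv2D(f, 3, padding="same")(x)
        x = layers.BatchNormalization()(x)
        x = layers.Activation("relu")(x)
        x = layers.MaxPooling2D(2)(x)
    return x


def build_cnn(input_shape=(128, 126, 1)):
    from tensorflow.keras import layers, models

    inputs = layers.Input(shape=input_shape)
    x = conv_blocks(inputs, layers)
    x = layers.GlobalAveragePooling2D()(x)
    x = layers.Dense(64, activation="relu")(x)
    x = layers.Dropout(0.3)(x)
    outputs = layers.Dense(2, activation="softmax")(x)  # bonafide vs spoof
    return models.Model(inputs, outputs, name="cnn")


def build_cnn_lstm(input_shape=(128, 126, 1), filters=(32, 64, 128)):
    from tensorflow.keras import layers, models

    freq, time = pooled_shape(input_shape[0], input_shape[1], len(filters))
    inputs = layers.Input(shape=input_shape)
    x = conv_blocks(inputs, layers, filters)
    x = layers.Permute((2, 1, 3))(x)                   # (time, freq, channels)
    x = layers.Reshape((time, freq * filters[-1]))(x)  # one vector per time step
    x = layers.LSTM(64, return_sequences=True)(x)
    x = layers.LSTM(32)(x)
    x = layers.Dropout(0.3)(x)
    outputs = layers.Dense(2, activation="softmax")(x)
    return models.Model(inputs, outputs, name="cnn_lstm")


def training_callbacks():
    from tensorflow.keras import callbacks

    return [
        callbacks.EarlyStopping(monitor="val_loss", patience=8,
                                restore_best_weights=True),
        callbacks.ReduceLROnPlateau(monitor="val_loss", factor=0.5, patience=3),
    ]
```

The `Permute` step moves the time axis first so that the LSTM steps through spectrogram frames rather than mel bands.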

Dataset Details

The project uses the Fake Audio dataset, which contains 50 bonafide recordings and 50 spoof recordings across five matched speaker pairs. The spoof files are generated through wavelet-domain manipulation using the db10 wavelet at four decomposition levels. The training set is augmented to roughly 280 samples using noise injection, time shifting, and pitch shifting. The 70/15/15 train-validation-test split is stratified to maintain class balance.
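The three augmentation strategies might be implemented roughly as shown here. The signal-to-noise ratio, maximum shift, and semitone step are assumed values, and the function names are hypothetical. One plausible reading of the "roughly 280" figure, also an assumption, is that each of the 70 training files is kept alongside three augmented copies.

```python
import numpy as np


def add_gaussian_noise(y, rng, snr_db=20.0):
    """Add white noise at an assumed signal-to-noise ratio (in dB)."""
    signal_power = np.mean(y ** 2)
    noise_power = signal_power / (10 ** (snr_db / 10))
    return y + rng.normal(0.0, np.sqrt(noise_power), size=y.shape)


def time_shift(y, rng, max_fraction=0.1):
    """Circularly shift the clip by up to max_fraction of its length."""
    limit = int(len(y) * max_fraction)
    shift = int(rng.integers(-limit, limit + 1))
    return np.roll(y, shift)  # samples wrap around to the other end


def pitch_shift(y, sr=16_000, n_steps=2.0):
    """Shift pitch by n_steps semitones (needs librosa)."""
    import librosa  # imported lazily; only this function needs it

    return librosa.effects.pitch_shift(y, sr=sr, n_steps=n_steps)


def augment(y, rng, sr=16_000):
    """Return the original clip plus one variant per strategy (4 clips)."""
    return [y, add_gaussian_noise(y, rng), time_shift(y, rng),
            pitch_shift(y, sr)]


# Arithmetic behind the approximate sample count (assumed interpretation).
n_files = 100
n_train = n_files * 70 // 100   # 70 files in the training split
n_augmented = n_train * 4       # original + 3 variants each = 280
```

Augmentation is applied to the training split only, so the validation and test clips stay untouched and the reported metrics reflect unmodified audio.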

Applications

- Real-time phone call screening for financial institutions and call centres

- Voice authentication security layer for banking and insurance applications

- Anti-fraud tooling for customer support pipelines where impersonation is a risk

- Academic research baseline for anti-spoofing and voice liveness detection

- Awareness and demonstration tool for cybersecurity training programmes

- Integration base for Twilio or Asterisk telephony platforms with live call monitoring

What You Get with This Project

The source code includes the complete Jupyter notebook with 10 annotated training cells, the Flask application with all four HTML templates, and a requirements file. After training, the notebook saves the best model as best_model.h5 and a config.pkl that ensures identical preprocessing during inference. Training runs locally on a CPU in 5 to 20 minutes and needs roughly 4 GB of RAM.
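The idea behind config.pkl can be sketched as follows: the notebook writes its preprocessing parameters once, and the server reads the same file instead of hard-coding its own values. The dictionary keys, the `n_fft` and `hop_length` values, and the helper names are assumptions for illustration; only the file name and the 16 kHz, four-second, 128-band settings come from the description.

```python
import pickle
from pathlib import Path

# Parameters shared by training and inference.
PREPROCESS_CONFIG = {
    "sample_rate": 16_000,  # from the description
    "duration_s": 4,        # from the description
    "n_mels": 128,          # from the description
    "n_fft": 1024,          # assumed value
    "hop_length": 512,      # assumed value
}


def save_config(config, path="config.pkl"):
    """Write the preprocessing parameters at the end of training."""
    with Path(path).open("wb") as fh:
        pickle.dump(config, fh)


def load_config(path="config.pkl"):
    """Read the same parameters back when the server starts."""
    with Path(path).open("rb") as fh:
        return pickle.load(fh)
```

Because pickle can execute code on load, a file like this should only be read from a trusted location, such as the project's own output folder.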

You can also get a pre-built project report, custom presentation slides, or a research paper draft through our research paper publishing service. If you need the project set up on your machine with a walkthrough of the code, the project setup and explanation service covers exactly that.

Who This Project Is For

This project works well for BCA, MCA, BTech CSE, and BSc IT students looking for a final year project in cybersecurity or deep learning. The combination of audio processing, two neural architectures, a REST API, and a full web UI gives you enough material to write a solid report and defend the project in front of an evaluation panel. Students who want a more unusual topic than the standard disease prediction or chatbot project will find this one stands out.

If you are comparing similar projects, take a look at the deepfake detection project and the PhishGuard AI phishing email detection project for related work in media authenticity and fraud detection.

Technical Specifications

- Language: Python 3.9 and above

- Deep Learning Framework: TensorFlow 2.12 and above with Keras

- Audio Processing: librosa 0.10, soundfile, resampy

- Web Framework: Flask 2.3

- Visualisation: Matplotlib, Seaborn

- Evaluation: scikit-learn (accuracy, F1, AUC, EER)

- Notebook: Jupyter with 10 structured cells

- Supported audio formats: WAV, MP3, FLAC, OGG, M4A, WebM

- Server port: 5001 (configurable)

- Model sizes: CNN approximately 2 MB, CNN+LSTM approximately 8 MB
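The EER listed under Evaluation has no ready-made scikit-learn function, so it is usually derived from the model scores. The NumPy sketch below shows one common way to do that; it is not necessarily how the notebook computes it. The function name, the label convention (1 = spoof), and the sample scores are assumptions.

```python
import numpy as np


def equal_error_rate(labels, scores):
    """Return (eer, threshold) for binary spoof detection.

    labels: 1 for spoof, 0 for bonafide (assumed convention).
    scores: model output, higher meaning "more likely spoof".
    """
    labels = np.asarray(labels, dtype=int)
    scores = np.asarray(scores, dtype=float)
    spoof = scores[labels == 1]
    bonafide = scores[labels == 0]

    # A clip is flagged as spoof when its score is >= the threshold.
    thresholds = np.unique(scores)
    # Miss rate: spoof clips that slip through as bonafide.
    miss = np.array([(spoof < t).mean() for t in thresholds])
    # False alarm rate: bonafide clips wrongly flagged as spoof.
    false_alarm = np.array([(bonafide >= t).mean() for t in thresholds])

    # The EER is taken where the two error rates are closest.
    i = int(np.argmin(np.abs(miss - false_alarm)))
    return float((miss[i] + false_alarm[i]) / 2), float(thresholds[i])


# Hypothetical scores, for illustration only.
labels = [0, 0, 0, 0, 1, 1, 1, 1]
scores = [0.1, 0.2, 0.3, 0.6, 0.4, 0.7, 0.8, 0.9]
eer, threshold = equal_error_rate(labels, scores)
print(eer, threshold)
```

With a test split of only about 15 clips, the EER moves in coarse steps, so small differences between the two models should be read with caution.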

Extra Add-Ons Available – Elevate Your Project

Add any of these professional upgrades to save time and impress your evaluators.

Project Setup

We'll install and configure the project on your PC via remote session (Google Meet, Zoom, or AnyDesk).

Source Code Explanation

1-hour live session to explain logic, flow, database design, and key features.

Want to know exactly how the setup works? Review our detailed step-by-step process before scheduling your session.

₹999

Custom Documents (College-Tailored)

- Custom Project Report: ₹1,200

- Custom Research Paper: ₹1,000

- Custom PPT: ₹500

Fully customized to match your college format, guidelines, and submission standards.

Project Modification

Need feature changes, UI updates, or new features added?

Charges vary based on complexity.

We'll review your request and provide a clear quote before starting work.