AI After Life — AI-Powered Memorial Persona Chat System with Voice Cloning and WhatsApp Training

Create a lifelike digital persona of your loved ones using WhatsApp chats and voice recordings. AI After Life preserves their personality and voice, enabling natural conversations through text and speech.

Technology Used

Python | Django 4.2 | Django REST Framework | Groq API | LLaMA LLM | Coqui TTS XTTS v2 | Bark TTS | Celery | Redis | librosa | PyTorch | SQLite | Bootstrap 5 | JavaScript | Web Speech API | Pydub | Soundfile | Pandas | NumPy

Project Files

Losing someone close leaves a void that words cannot fill. AI After Life is a deeply personal Django-based web application that allows you to preserve the communication style, personality quirks, and even the voice of someone you care about. By training an AI persona on real WhatsApp chat exports and voice recordings, this system creates a digital companion that responds the way your loved one would have — complete with their tone, vocabulary, favorite phrases, and speaking rhythm.

This is not just another chatbot. AI After Life parses thousands of real messages, identifies personality traits, extracts communication patterns, and builds a linguistic profile that drives every response. When you add a voice recording, the system analyzes pitch, timbre, rhythm, and spectral features and uses them to clone the voice with a text-to-speech engine, the primary one being Coqui TTS with XTTS v2. The result is a conversational experience that feels genuine and deeply familiar.

How It Works

The entire workflow is designed to be simple for any user. You start by creating a named persona with relationship context — whether it is a parent, friend, partner, or mentor. Then you upload a WhatsApp chat export file and optionally a voice recording. The system runs a nine-step automated training pipeline that handles everything from message parsing to voice model generation. Once training completes, you can open a chat interface and begin having real conversations through text or voice.

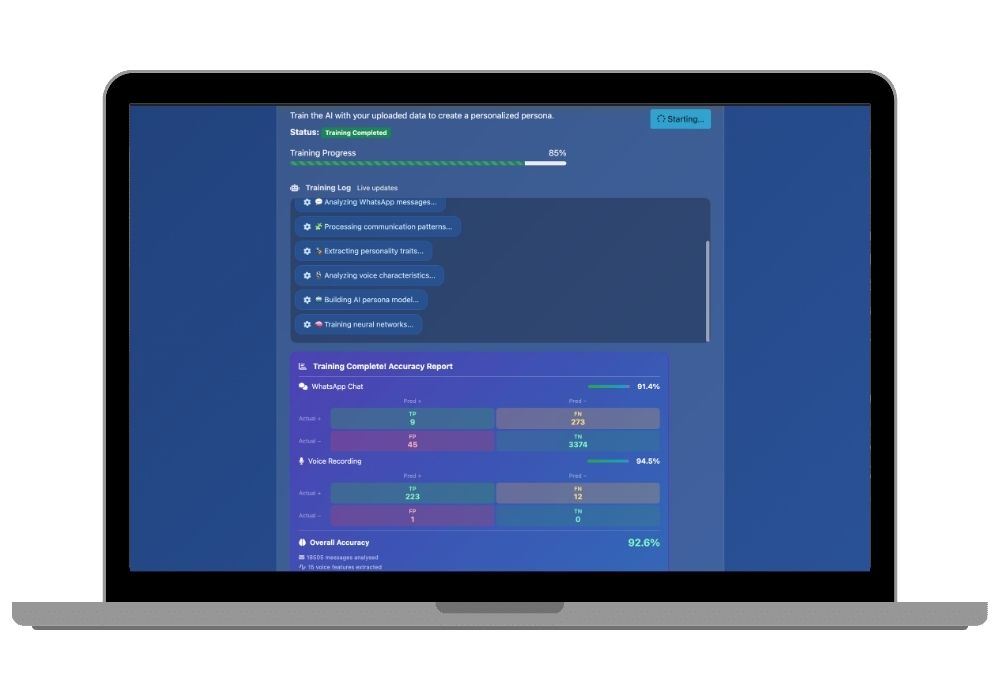

The training pipeline calculates a weighted accuracy score using confusion matrix metrics. Text analysis contributes sixty percent of the overall accuracy, while voice modeling contributes the remaining forty percent. This gives you a transparent understanding of how well the persona has been trained before you start chatting.
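As a rough illustration of the sixty/forty weighting described above, the sketch below combines a text accuracy and a voice accuracy into one score. The function names, and the choice of plain accuracy (correct predictions over all predictions) as the confusion matrix metric, are assumptions made for this example; the project's own metric code may differ.

```python
def accuracy_from_confusion(tp: int, fp: int, fn: int, tn: int) -> float:
    """Plain accuracy from confusion matrix counts (assumed metric)."""
    total = tp + fp + fn + tn
    if total == 0:
        return 0.0
    return (tp + tn) / total


def weighted_accuracy(text_acc: float, voice_acc: float,
                      text_weight: float = 0.6,
                      voice_weight: float = 0.4) -> float:
    """Combine the two scores using the 60/40 split stated in the description."""
    return text_weight * text_acc + voice_weight * voice_acc
```

For example, a text accuracy of 0.90 and a voice accuracy of 0.80 combine to 0.6 × 0.90 + 0.4 × 0.80 = 0.86.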

Key Features

AI After Life comes packed with features that make it a standout AI and machine learning final year project. The persona creation system supports multiple personas per user, each with its own training data and conversation history. The WhatsApp parser handles five different regional export formats including Android twelve-hour, Android twenty-four-hour, iOS bracket format, ISO date format, and European dot-separated format — so it works no matter where your WhatsApp was set up.

The voice cloning module supports two engines. Coqui TTS with XTTS v2 serves as the primary engine and delivers high-quality voice synthesis from short reference audio clips. Bark is available as an experimental alternative for general text-to-speech. If both engines fail, the system gracefully falls back to a silent response rather than crashing. Voice samples up to two minutes long are supported, though ten to thirty seconds of clear speech typically gives the best results.
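The engine fallback described above can be pictured as a simple chain: try each engine in order and return silence if none succeeds. This is a minimal sketch, not the project's actual code. The `Engine` type, the function names, and the reading of "silent response" as a short silent WAV clip are all assumptions for illustration; real engines would wrap the Coqui TTS and Bark libraries.

```python
import io
import wave
from typing import Callable, List, Tuple

# Hypothetical engine signature: text in, audio bytes out.
Engine = Callable[[str], bytes]


def silent_wav(duration_s: float = 0.5, sample_rate: int = 16000) -> bytes:
    """Build a short mono 16-bit WAV clip containing only silence."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)          # 2 bytes per sample = 16-bit audio
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(b"\x00\x00" * int(duration_s * sample_rate))
    return buffer.getvalue()


def synthesize_with_fallback(text: str,
                             engines: List[Tuple[str, Engine]]) -> Tuple[str, bytes]:
    """Try each (name, engine) pair in order; fall back to silence if all fail."""
    for name, engine in engines:
        try:
            return name, engine(text)
        except Exception:
            continue                       # this engine failed, try the next one
    return "silent", silent_wav()
```

Passing the engines as an ordered list keeps the primary and experimental engines interchangeable, so adding a third engine later would not change the calling code.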

The chat interface offers both text and voice modes. In text mode, you type messages and receive styled responses that match the persona's communication patterns. In voice mode, the browser-based Web Speech API captures your voice input, sends it to the AI for processing, and plays back the response in the cloned voice — complete with an animated microphone and sound wave visualizer that makes the experience feel alive.

Technology Deep Dive

The backend runs on Django 4.2 with Django REST Framework handling all API endpoints. AI response generation is powered by the Groq API, which provides ultra-fast inference using LLaMA-based large language models. Each response is styled according to the extracted personality profile, so the AI does not just answer questions — it answers them the way your person would have.
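One way to picture how a personality profile can style each response is to fold it into the system prompt sent with every chat request. The sketch below only builds the message list in the common role/content chat format and makes no API call. The profile keys (`traits`, `favorite_phrases`), the function name, and the prompt wording are hypothetical.

```python
from typing import Dict, List


def build_messages(persona_name: str, relationship: str, profile: Dict,
                   history: List[Dict[str, str]],
                   user_message: str) -> List[Dict[str, str]]:
    """Build a chat message list whose system prompt carries the persona's style."""
    traits = ", ".join(
        f"{name}: {level}" for name, level in profile.get("traits", {}).items()
    )
    phrases = "; ".join(profile.get("favorite_phrases", []))
    system_prompt = (
        f"You are speaking as {persona_name}, the user's {relationship}. "
        f"Personality traits: {traits or 'unknown'}. "
        f"Phrases they often used: {phrases or 'none recorded'}. "
        "Match their tone, vocabulary, and sentence length."
    )
    return [
        {"role": "system", "content": system_prompt},
        *history,                                   # earlier turns, oldest first
        {"role": "user", "content": user_message},
    ]
```

The returned list could then be handed to whichever chat completion client the project uses.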

Audio processing relies on several libraries. Feature extraction uses librosa and covers MFCCs, spectral centroids, and chromagrams. PyTorch powers the deep learning components of voice analysis. Soundfile and Pydub manage audio file format conversions and manipulations. The entire audio pipeline works with WAV, MP3, and M4A formats out of the box.

The WhatsApp parser uses custom regex patterns to handle the messy reality of exported chat files. It strips system messages, identifies sender names, handles multiline messages, and correctly timestamps everything. The parsed data feeds into a communication analysis module that extracts vocabulary preferences, sentence structure patterns, emoji usage, response timing habits, and emotional tendencies.
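To make the parsing step more concrete, here is a small sketch of a regex-based parser that skips system messages, splits out sender names, and joins multiline messages. The pattern and the sample header layouts in the comments are assumptions about what the five export formats look like, not the project's actual regexes. For simplicity, dates and times are kept as strings instead of being converted to timestamps.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Header layouts assumed for this sketch:
#   12/31/23, 9:15 PM - Alice: Hi        (Android, 12-hour)
#   31/12/2023, 21:15 - Alice: Hi        (Android, 24-hour)
#   [31/12/2023, 21:15:30] Alice: Hi     (iOS, bracketed)
#   2023-12-31, 21:15 - Alice: Hi        (ISO date)
#   31.12.23, 21:15 - Alice: Hi          (European, dot-separated)
HEADER_RE = re.compile(
    r"^\[?(?P<date>\d{1,4}[./-]\d{1,2}[./-]\d{2,4}),?\s+"
    r"(?P<time>\d{1,2}:\d{2}(?::\d{2})?(?:\s?[AaPp][Mm])?)\]?"
    r"(?:\s-\s|\s)(?P<rest>.*)$"
)


@dataclass
class Message:
    date: str
    time: str
    sender: str
    text: str


def parse_chat(raw: str) -> List[Message]:
    """Parse exported chat text into messages, skipping system lines."""
    messages: List[Message] = []
    current: Optional[Message] = None
    for line in raw.splitlines():
        line = line.lstrip("\ufeff\u200e")      # drop BOM / direction marks
        match = HEADER_RE.match(line)
        if match:
            sender, separator, text = match.group("rest").partition(": ")
            if not separator:                    # no "sender: text" => system message
                current = None
                continue
            current = Message(match.group("date"), match.group("time"), sender, text)
            messages.append(current)
        elif current is not None:
            current.text += "\n" + line          # continuation of a multiline message
    return messages
```

Treating any header line without a `sender: text` part as a system message is a heuristic; a production parser would likely need extra rules for edge cases such as group subject changes that contain a colon.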

On the frontend, Bootstrap 5 provides a clean and responsive interface that works across devices. Font Awesome icons add visual polish. The chat interface uses vanilla JavaScript with the Web Speech API for browser-native speech recognition and synthesis, so voice interaction needs no additional plugins in browsers that support the API.

Training Pipeline Breakdown

The nine-step training process is fully automated and includes progress tracking so you can watch it work in real time.

- Step one initializes the pipeline.

- Step two loads all uploaded data files.

- Step three parses WhatsApp messages into structured records.

- Step four analyzes communication patterns across the entire chat history.

- Step five extracts personality traits like humor, formality, enthusiasm, and emotional range.

- Step six processes voice samples through spectral analysis.

- Step seven builds the voice synthesis model.

- Step eight finalizes the complete persona model by combining text and voice profiles.

- Step nine marks training as complete and reports the final accuracy score.
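The nine steps above can be sketched as an ordered list of functions run one after another, with a callback reporting progress after each step. This is only an outline under stated assumptions: the step names paraphrase the description, while `run_pipeline`, `placeholder_step`, and the shared `context` dictionary are hypothetical names chosen for the example.

```python
from typing import Callable, Dict, List, Tuple

Step = Callable[[Dict], None]

STEP_NAMES = [
    "Initialize pipeline",
    "Load uploaded data files",
    "Parse WhatsApp messages",
    "Analyze communication patterns",
    "Extract personality traits",
    "Process voice samples",
    "Build voice synthesis model",
    "Finalize persona model",
    "Complete training and report accuracy",
]


def run_pipeline(steps: List[Tuple[str, Step]],
                 on_progress: Callable[[int, int, str], None]) -> Dict:
    """Run each step in order, reporting (step number, total, name) after each."""
    context: Dict = {}                     # shared state passed from step to step
    total = len(steps)
    for number, (name, step) in enumerate(steps, start=1):
        step(context)
        on_progress(number, total, name)
    return context


def placeholder_step(context: Dict) -> None:
    """Stand-in for real work such as parsing or voice analysis."""
    context["steps_run"] = context.get("steps_run", 0) + 1
```

Pairing every name in `STEP_NAMES` with `placeholder_step` and passing a callback that prints `[number/total] name` would show nine progress lines, one per step. In a real deployment each placeholder would be replaced by the actual parsing, analysis, or voice modeling function.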

This structured approach makes the project extremely easy to present and explain during a final year project viva or demonstration. Every step has a clear purpose, and the accuracy metric gives evaluators a quantifiable measure of system performance.

Who Should Use This Project

AI After Life is ideal for students pursuing their final year project in artificial intelligence, natural language processing, deep learning, or human-computer interaction. It combines multiple advanced technologies — large language models, voice cloning, text analysis, and web development — into a single cohesive application. The emotional and practical relevance of the project makes it a compelling choice for presentations and research papers alike.

If you are looking for a project that demonstrates real technical depth while solving a genuinely meaningful problem, this is it. The combination of NLP, audio processing, and generative AI makes it suitable for BCA, MCA, BTech, BSc IT, and MSc Computer Science submissions. You can also explore our add-on services for custom project reports, research papers, PowerPoint presentations, and one-on-one mentorship to help you through your submission process.

Applications

Beyond academic use, this project has real-world applications in grief counseling and emotional support technology. Memorial AI services are an emerging field in the tech industry, and this project gives you a working prototype that demonstrates the core concepts. It can also be extended for corporate use cases like preserving institutional knowledge from retiring employees, creating interactive historical figures for educational platforms, or building personalized customer service agents trained on specific communication styles.

The modular architecture makes it straightforward to swap out components. You can replace the Groq API with OpenAI or a self-hosted model. You can upgrade the voice engine to newer versions as they release. You can add Telegram or Instagram message parsing alongside WhatsApp. The project is built to grow with your ambitions.
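Swapping the language model provider is easiest when the rest of the application depends on a small interface instead of a specific client. The sketch below shows that idea with a hypothetical `ChatBackend` protocol and an offline `EchoBackend` stand-in; neither name comes from the project, and a real backend would wrap the Groq client, OpenAI, or a self-hosted model behind the same method.

```python
from typing import Dict, List, Protocol


class ChatBackend(Protocol):
    """Hypothetical interface: anything with this method can generate replies."""

    def complete(self, messages: List[Dict[str, str]]) -> str:
        ...


class EchoBackend:
    """Offline stand-in, useful for demos and tests without an API key."""

    def complete(self, messages: List[Dict[str, str]]) -> str:
        return "You said: " + messages[-1]["content"]


def generate_reply(backend: ChatBackend, messages: List[Dict[str, str]]) -> str:
    """The calling code depends only on the interface, not on a provider."""
    return backend.complete(messages)
```

Because `generate_reply` accepts any object with a matching `complete` method, switching providers means writing one new class and changing which instance is passed in.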

What You Get

When you purchase this project from CodeAj Marketplace, you receive the complete source code with all modules, a pre-built project report ready for submission, and full documentation covering installation, configuration, and usage. Our team also offers project setup with source code explanation so you understand every line of code before your viva. Need a custom version or additional features? Our mentorship program provides dedicated support until your project is successfully submitted.

Extra Add-Ons Available – Elevate Your Project

Add any of these professional upgrades to save time and impress your evaluators.

Project Setup

We'll install and configure the project on your PC via remote session (Google Meet, Zoom, or AnyDesk).

Source Code Explanation

1-hour live session to explain logic, flow, database design, and key features.

Want to know exactly how the setup works? Review our detailed step-by-step process before scheduling your session.

₹1,499

Custom Documents (College-Tailored)

- Custom Project Report: ₹1,200

- Custom Research Paper: ₹1,000

- Custom PPT: ₹500

Fully customized to match your college format, guidelines, and submission standards.

Project Modification

Need feature changes, UI updates, or new features added?

Charges vary based on complexity.

We'll review your request and provide a clear quote before starting work.